Market Battlegrounds: Capital, Contracts, and Developer Loyalty

The intense, ongoing competition between the leading AI firms, the builders of the foundation models, is playing out not just in white papers and model releases but in strategic corporate maneuvers aimed at securing market share and, crucially, the loyalty of the developer community, which remains the ultimate platform gatekeeper.

The Enterprise Spending Wars and Market Share Dynamics

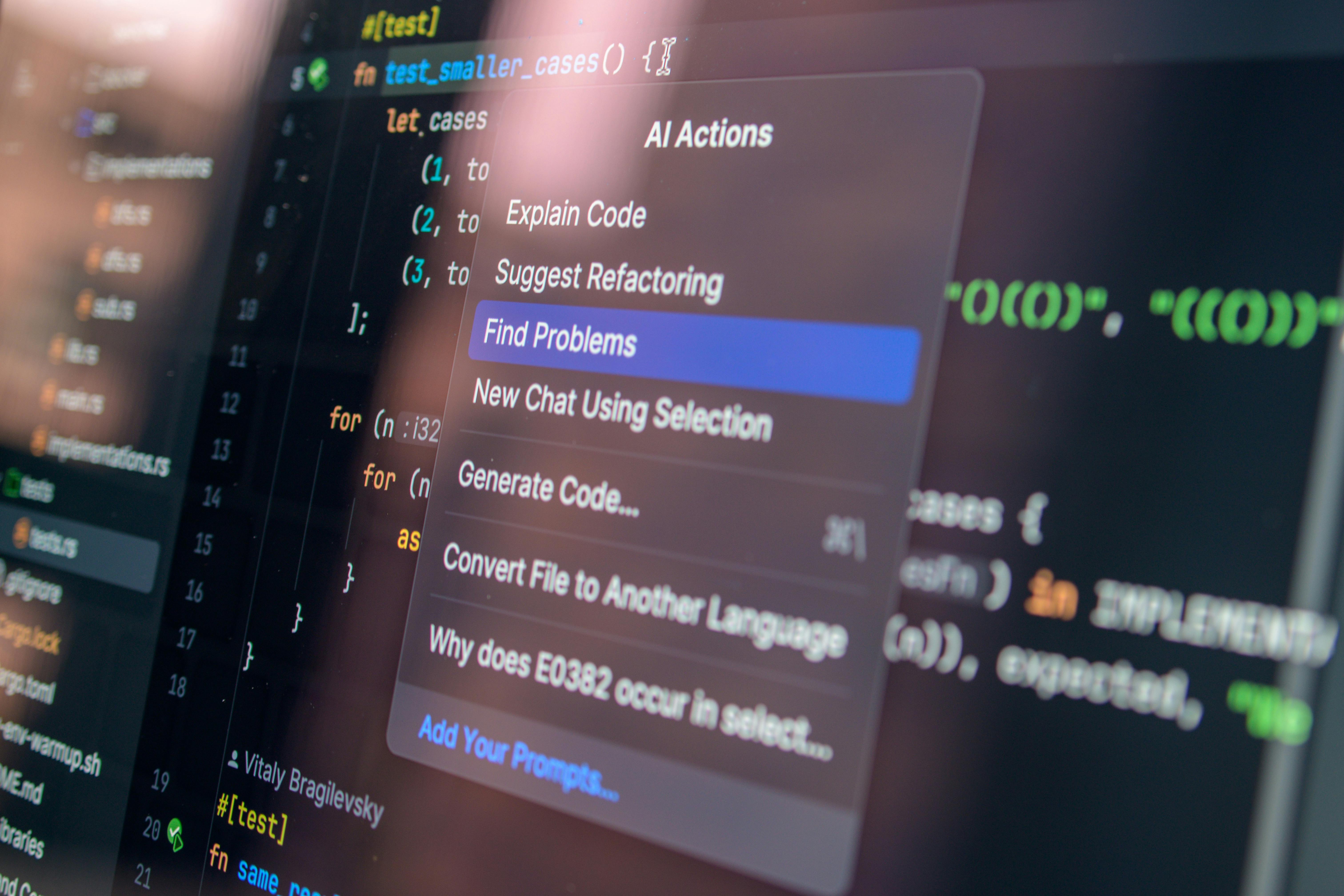

The financial battle for dominance in the enterprise AI segment was particularly fierce throughout 2025 and continues into 2026. While one company might lead in overall usage metrics thanks to its consumer-facing tools, the critical, high-stakes battle is fought over actual B2B expenditure and the allocation of **production-oriented workloads**. Reports from the end of last year indicated significant shifts, with one major player capturing a commanding share of enterprise *spending*, moving ahead of the incumbent that previously dominated both raw usage figures and top-line revenue. This suggests that while many organizations are experimenting widely with various tools, the high-value, mission-critical development tasks are increasingly being allocated to the models that demonstrate superior performance and reliability in those rigorous settings. This struggle also plays out in public, including increasingly pointed advertising campaigns designed to win developer sentiment by subtly critiquing the operational decisions or perceived shortcomings of rivals, turning what should be a technical evaluation into a public relations contest for the trust of the builder class. The focus is shifting from *what* the model can do to *how reliably* it integrates into existing development environments.

Negotiating the New Terms: Data Portability and Lock-in Factors

For organizations making the significant, long-term commitment to these powerful, proprietary AI code-generation systems, the specter of vendor lock-in has become a primary concern in contract negotiations. The very ease of use that draws development teams in often stems from deep, provider-specific integrations—using custom APIs, proprietary data formats, or unique agent protocols that are hard to untangle. Forward-thinking engineering leaders are now dedicating significant legal and technical resources to contract discussions focused on mechanisms to mitigate this future dependency. Key negotiation points now explicitly revolve around:

- Guaranteed access to all exported training data, or the derived knowledge base, in open, non-proprietary formats.

- Clear service continuity and model portability clauses, especially concerning custom fine-tuning.

- Explicit, ironclad guarantees regarding the ownership of the source code generated *during* the contract period.

The technical effort required to migrate away from a shallow API integration might be measured in a matter of days, but escaping a deep integration involving fine-tuned models and complex contextual embeddings can require weeks or even months of specialized engineering effort. This cost imbalance makes these contractual safeguards absolutely vital for maintaining long-term strategic flexibility. Avoiding vendor lock-in is now a top-tier concern for CTOs, right alongside security and budget adherence.
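One common technical complement to these contractual safeguards is to keep application code behind a thin, provider-neutral interface, so that a swap touches one adapter rather than every call site. The following is a minimal sketch under stated assumptions: `CodeGenProvider`, `VendorAClient`, `VendorBClient`, and `scaffold_endpoint` are hypothetical names invented for illustration, and the vendor classes are stand-ins that make no real API calls.

```python
from dataclasses import dataclass
from typing import Protocol


class CodeGenProvider(Protocol):
    """Hypothetical minimal interface that application code depends on."""

    def generate(self, prompt: str) -> str: ...


@dataclass
class VendorAClient:
    """Stand-in for a wrapper around one vendor's SDK (no real API calls)."""

    model: str = "vendor-a-model"

    def generate(self, prompt: str) -> str:
        # A real adapter would translate the prompt into this vendor's request format.
        return f"[{self.model}] {prompt}"


@dataclass
class VendorBClient:
    """Stand-in for a second vendor with a different backend."""

    model: str = "vendor-b-model"

    def generate(self, prompt: str) -> str:
        return f"[{self.model}] {prompt}"


def scaffold_endpoint(provider: CodeGenProvider, resource: str) -> str:
    """Application logic sees only the interface, never a vendor-specific type."""
    return provider.generate(f"Write a CRUD endpoint for {resource}")


if __name__ == "__main__":
    # Swapping vendors changes one constructor call, not the application logic.
    for provider in (VendorAClient(), VendorBClient()):
        print(scaffold_endpoint(provider, "orders"))
```

An adapter like this addresses only the shallow end of the problem. Fine-tuned models and stored embeddings remain tied to the provider that produced them, which is where the portability clauses above do their work.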

The Language of Creation: English as the Highest Abstraction Layer

Perhaps the most profound, philosophical shift in the coding revolution is the elevation of natural language—specifically, expressive English—to the status of a primary programming interface. The industry is moving toward a world where the instruction set for software creation is predominantly written in plain, expressive language rather than in the rigid, unforgiving syntax of a formal programming language.

From Syntax Mastery to Intent Specification

The technical evolution has added an entirely new, highly effective layer atop the existing stack. Where previous layers, such as the move from assembly to C, or from C to Python, made programming more accessible by abstracting away machine details, the current layer introduces natural language as the primary means of conveying high-level architecture and functional requirements. This transition means that success is less about recalling precise syntax rules for Python or Java and more about the clarity and completeness of one’s *intent specification*. The developer transforms: they become less of a translator between an abstract idea and machine code, and more of a domain expert articulating a desired outcome to a highly capable, yet extremely literal-minded, executor. This has profound implications for education, shifting the focus from memorizing language constructs to mastering precision in communication and understanding the subtle implications of word choice when describing system behavior. The core skill is now one of rigorous, unambiguous articulation. This mirrors an older, pre-computer concept—the clear definition of requirements—but at a thousand times the speed and complexity. For insight into how this abstraction works, one can look at the conceptual frameworks underpinning modern LLM reasoning.

The Role of Prompt Engineering in System Validation

This reliance on natural language makes prompt engineering the truly critical skill for ensuring correctness and adherence to quality standards. The prompt is no longer just a query; it is the specification document, the contract, and the initial test case combined. Effective prompt design in this environment requires not just suggestive phrasing, but a deep, almost psychological understanding of how the underlying models interpret ambiguity and internal bias. Developers must engineer prompts that robustly constrain the AI’s solution space, explicitly detailing edge cases, security requirements, and performance expectations in a way that leaves zero room for misinterpretation. The ability to critically review an AI-generated solution and then iterate the natural language instruction—tweaking a single adjective or clause to force the model to produce a superior or more secure implementation—is the new form of debugging. This replaces the step-by-step inspection of running code with the iterative refinement of conceptual instruction. This new form of troubleshooting is a powerful new skill set, essential for maintaining code quality in an AI-assisted world.
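Treating the prompt as a specification becomes easier to enforce when the prompt is assembled from structured fields rather than typed freehand. The sketch below is one illustrative way to do that, not an established tool: `PromptSpec` and its field names are assumptions made for this example, and the rendered layout is arbitrary.

```python
from dataclasses import dataclass, field


@dataclass
class PromptSpec:
    """Hypothetical container for a prompt that doubles as a specification."""

    task: str
    edge_cases: list[str] = field(default_factory=list)
    security: list[str] = field(default_factory=list)
    performance: list[str] = field(default_factory=list)

    def missing_sections(self) -> list[str]:
        """Names of constraint sections left empty, to be reviewed before sending."""
        sections = {
            "edge_cases": self.edge_cases,
            "security": self.security,
            "performance": self.performance,
        }
        return [name for name, items in sections.items() if not items]

    def render(self) -> str:
        """Flatten the spec into plain text for the model."""
        lines = [f"Task: {self.task}"]
        for title, items in (
            ("Edge cases", self.edge_cases),
            ("Security requirements", self.security),
            ("Performance expectations", self.performance),
        ):
            if items:
                lines.append(f"{title}:")
                lines.extend(f"- {item}" for item in items)
        return "\n".join(lines)


if __name__ == "__main__":
    spec = PromptSpec(
        task="Parse ISO 8601 dates",
        edge_cases=["empty string", "missing timezone offset"],
        security=["reject inputs longer than 64 characters"],
    )
    print(spec.render())
    print("Unspecified:", spec.missing_sections())
```

Keeping the spec in a structure like this also makes each iteration a reviewable diff: changing one constraint changes one line, which can be checked against the change in the generated output.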

Foreshadowing the Near Future: The Industry’s Unavoidable Trajectory

As 2025 closed and we move deeper into 2026, the trajectory set by the leading AI labs suggests a future where the role of the human in the loop is irrevocably altered, forcing structural changes across education, hiring, and technological philosophy. The debate has largely settled: the technology is here to stay, and its influence will only deepen across every vertical.

The Shrinking Role of Entry-Level Coding Positions

One of the most immediate and concerning consequences of this velocity shift is the pronounced contraction of traditional entry-level roles. If junior developers were historically valued for their ability to handle the high-volume, lower-complexity tasks—the boilerplate code, the routine CRUD operations, the basic unit tests—and these are precisely the tasks AI agents now perform with maximum efficiency and reliability, the traditional on-ramp to the profession is disappearing. Companies are already adjusting their hiring strategies, indicating a clear preference for senior engineers capable of architecting, supervising, and auditing AI-driven output over a large cohort of trainees who would spend most of their time writing code that an agent could generate instantaneously. This necessitates a radical rethinking of computer science curricula across universities and bootcamps. Education must pivot away from teaching coding as a primary, marketable skill toward teaching essential AI literacy, rigorous system validation, and high-level design principles to ensure a pipeline of qualified future architects, rather than merely writers of code. This transformation presents a significant challenge to traditional academic structures, which are notoriously slow to adapt to seismic shifts like this one.

The Long-Term Imperative: Utility Over Brute Force Scale

Looking beyond the immediate market capture battle fueled by massive capital infusions, the long-term victory seems destined for the entity or approach that demonstrates superior *utility* in the most critical domain: generating demonstrably high-quality, secure code. The philosophical divide between the two leading AI paradigms suggests a divergence. One might become the ubiquitous, general-purpose utility platform: rich, essential for mundane, high-volume tasks, yet perhaps technologically outpaced in specialized, cutting-edge areas. The other might secure the intense loyalty of the core innovation engine, the elite developers building the next generation of complex, robust systems. The ultimate measure of success will be whether sheer market capitalization and existing distribution can sustain a platform, or whether the sustained, measurable improvement in code quality, precision, and workflow efficiency offered by a competitor will eventually drain developer allegiance away. In the age of intelligence augmentation, utility, security, and developer trust will ultimately supersede the sheer gravitational pull of existing market size. The code-centric developer of the past is fading, replaced by the system-fluent AI collaborator, a transformation ignited by the escalating capabilities of models from major labs and now cemented by operational realities on the ground.

Actionable Takeaways for the AI-Native Engineer (February 2026)

The goal is no longer to compete *against* the AI but to orchestrate it better than anyone else. Here are three non-negotiable actions every developer must take right now to thrive in this accelerated environment:

- Master Verification and Testing Frameworks: Since AI is writing the code, your primary value is *proving* it works correctly and securely. Dedicate 50% of your learning time to advanced testing methodologies, formal verification tools, and adversarial testing techniques. Your new job title is the “Chief Trust Officer” for your AI-generated output.

- Deepen Your “Why”: Identify the one core domain (e.g., low-latency networking, complex state management, distributed consensus) where you want to maintain *deep* human intuition. Use AI agents to handle all the boilerplate in that domain, but spend the time saved actually reading the underlying specifications and proof-of-concept academic papers. This human-retained intuition will be the invaluable safety net when the AI fails on novel problems.

- Become an Expert Orchestrator: Move beyond single-turn prompts. Learn to chain AI agents together, assigning roles, managing state between them, and building multi-step automated workflows. Understanding agentic design patterns is the new **software architecture**. For insights on how to manage these complex systems, look into frameworks that track **AI agent performance** across long-running tasks.
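The first and third takeaways above can be combined in one small sketch: a pipeline that assigns roles, passes shared state from one agent to the next, and ends with a verification step that checks the generated code instead of trusting it. Everything here is an assumption made for illustration. The `planner`, `coder`, and `verifier` functions are plain Python stubs standing in for model calls, and `run_pipeline` is a hypothetical helper, not part of any agent framework.

```python
from typing import Callable

State = dict[str, str]
Agent = Callable[[State], State]


def planner(state: State) -> State:
    """Stub planning agent: records a plan derived from the goal."""
    return {**state, "plan": f"steps for: {state['goal']}"}


def coder(state: State) -> State:
    """Stub coding agent: returns fixed source text where a model call would go."""
    return {**state, "code": "def add(a, b):\n    return a + b\n"}


def verifier(state: State) -> State:
    """Runs the generated code against known cases and records the verdict."""
    namespace: dict = {}
    # exec is used only because the code above is a fixed, trusted stub;
    # real generated code should run in an isolated sandbox instead.
    exec(state["code"], namespace)
    add = namespace["add"]
    ok = add(2, 3) == 5 and add(-1, 1) == 0
    return {**state, "verified": "yes" if ok else "no"}


def run_pipeline(goal: str, agents: list[tuple[str, Agent]]) -> State:
    """Runs the agents in order, threading the shared state through each role."""
    state: State = {"goal": goal}
    for role, agent in agents:
        state = agent(state)
        state["last_role"] = role  # simple trace of which role ran last
    return state


if __name__ == "__main__":
    result = run_pipeline(
        "add two integers",
        [("planner", planner), ("coder", coder), ("verifier", verifier)],
    )
    print(result["plan"])
    print("verified:", result["verified"])
```

The design point is that the verification step sits inside the workflow as a role of its own, so output that fails its checks is flagged before a human ever reviews it.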

Where Do We Go From Here?

The speed of change is dizzying, but the path forward is clear: the future of engineering belongs to the human who can wield the machine most effectively. The most critical question remaining is not about the technology itself, but about the human adaptation required. What foundational skill do you believe will become utterly obsolete in the next five years, and what new skill do you think will command the highest premium for engineers by 2030? Let us know your predictions in the comments below. This conversation is far from over, and your insights are vital to mapping the new terrain of software creation.

Further Reading on Related Industry Shifts:

- For a deeper look into how AI’s impact is reshaping hiring priorities, check out recent analyses on AI literacy.

- To understand the technical mechanics that allow AI to excel in specific areas, review discussions on LLM reasoning.

- For strategic advice on maintaining flexibility in the face of platform evolution, investigate best practices for managing development environments.

- Explore the next evolution of team collaboration by reading about high-level design principles.

- To see how top firms are tracking the success of these autonomous systems, research the latest reports on AI agent performance.